The Shift to AI-Native Infrastructure

The first week of April 2026 has solidified a fundamental transition in the digital infrastructure supply chain. We are moving past the "experimental" phase of AI cluster deployment into a regime defined by institutional-grade asset management and rigorous hardware-layer economics. For businesses financing, using, and remarketing GPU-based systems, the primary directive is no longer just procurement: it is the optimization of the total lifecycle value.

This digest synthesizes the critical shifts across institutional capital, physical power constraints, and the emerging fiduciary requirements of hardware security.

1. The Institutional Shift: GPUs as High-Velocity Capital Assets

The sheer scale of recent capital injections: headlined by OpenAI’s $122 billion raise and CoreWeave’s $8.5 billion deal involving Blackstone and Meta: signals that GPU clusters are no longer treated as traditional IT expenses. They have become investment-grade assets with predictable, albeit aggressive, depreciation curves.

The hardware lifecycle for frontier training clusters has compressed to approximately 18 months. As the B200 and subsequent Blackwell-Ultra architectures dominate new deployments, the industry is witnessing a "recovery floor" supported by the technical valuation of the preceding H100 and A100 fleets.

Maintaining this floor requires more than a standard depreciation table; it demands a deep understanding of the GPU industry news and technical specifications that differentiate a high-value compute node from a legacy server. At GPU Resource, we emphasize that technical valuation is the only hedge against the rapid hardware cycles defining the 2026 market.

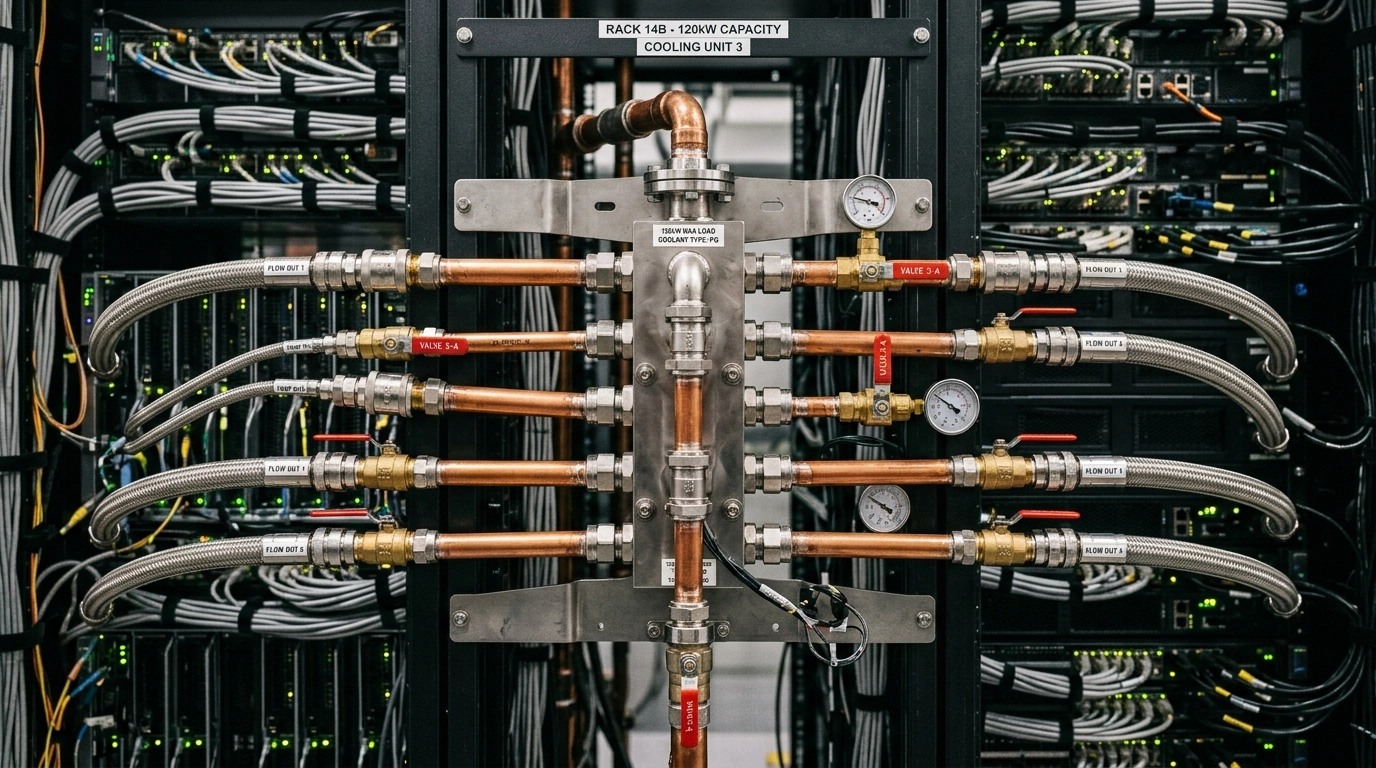

2. Physical Infrastructure: The 120kW "Power Wall"

We are currently observing the "Power Wall" as a primary driver for early cluster decommissioning. In 2024, a 40kW rack was considered high-density; today, the benchmark for B200-class deployments is the 120kW rack.

This escalation has created a massive opportunity cost for data center floor space. Legacy V100 and early A100 clusters, while still computationally functional, are being retired early because they cannot match the performance-per-watt or performance-per-square-foot of modern liquid-cooled infrastructure.

The Networking Multiplier: 800G to 1.6T

While the GPUs remain the headline, the networking stack: including 800G optics and InfiniBand interconnects: now represents 20% to 30% of the total cluster value. As the industry transitions toward 1.6T networking, the secondary market for 800G components has remained remarkably resilient.

For operators, the move to AI-native infrastructure requires a comprehensive fleet refresh assessment. Recovering value from high-speed optics and HSIO (High-Speed I/O) components is no longer optional; it is a critical component of the asset recovery strategy.

3. Security & Fiduciary Duty: Remarketing in a High-Demand Inference Market

A common misconception in the ITAD space is that high-security requirements necessitate the physical destruction of hardware. In the current market, where inference demand for A100s has surged by over 300%, shredding functional GPUs is a violation of fiduciary duty to shareholders.

The A100 remains the "workhorse" of the inference market due to its Multi-Instance GPU (MIG) capabilities and the relatively lower compute requirements of production-scale models compared to frontier training. This demand creates a significant secondary life market, but it must be balanced against stringent security protocols.

Hardware-Level Sanitization

GPU hardware presents unique data persistence risks that traditional drive-wiping tools cannot address. Effective sanitization for the secondary market requires hardware-level intervention across three primary layers:

- VBIOS (Video BIOS): Ensuring no persistent malicious code is embedded in the firmware.

- BMC (Baseboard Management Controller): Sanitizing out-of-band management interfaces that can retain configuration data.

- HBM (High-Bandwidth Memory): Addressing vulnerabilities like "LeftoverLocals" where residual data can persist across process terminations.

Certified hardware-level sanitization, compliant with NIST 800-88 standards, allows for high-value remarketing without compromising institutional security.

Conclusion: Optimizing the Compute Ecosystem

The shift to AI-native infrastructure is a supply-chain challenge disguised as a technological one. Whether you are managing the transition from air-cooled A100s to 120kW liquid-cooled Blackwell clusters or seeking to extract maximum value from a decommissioned networking stack, the technical specifics matter.

GPU Resource provides the industry’s most sophisticated GPU market pulse tool and proprietary valuation methodologies designed to outperform generic ITAD estimates.

Contact the GPU Resource team at info@gpuresource.com for custom pricing requests, buyer/seller connections, or to initiate a comprehensive fleet refresh assessment.

Category: Industry Analysis

Tags: GPU Remarketing, H100, B200, Data Center Infrastructure, 1.6T Networking, Asset Recovery, ITAD.