Inside an Amazon Data Center: The Architecture Powering the World’s Largest AI Clusters

In a previous piece for GPU Resource, we looked at Google’s Ironwood Superpod — a system that directly connects 9,216 TPUs into a single compute domain, operating as one unified parallel processor across 144 liquid-cooled racks. Google’s approach is vertically closed: proprietary silicon, proprietary interconnect, proprietary optical switching fabric, all engineered from the ground up for a single purpose.

Amazon Web Services has taken a different path to the same destination, and understanding the architectural differences matters — both for enterprises evaluating AI infrastructure and for the aftermarket community that will eventually handle this hardware when it comes offline.

AWS builds its own silicon too — but the stack looks different

Like Google, AWS has spent years developing custom chips to reduce its dependence on NVIDIA and optimize for its own workloads. The result is a three-chip family that forms the backbone of every major AWS AI data center today: Trainium for AI acceleration, Graviton for general-purpose CPU compute, and Nitro for networking and virtualization offload.

Graviton5, announced at re:Invent 2025, is now in its fifth generation. With 192 cores per chip and a cache five times larger than its predecessor, it delivers roughly 25% better compute performance than Graviton4. More than half of all new CPU capacity added to AWS is now Graviton-based — a remarkable shift away from x86 that is playing out across every major hyperscaler simultaneously.

Nitro, AWS’s silicon-based hypervisor and networking offload chip, is the less visible but equally important third leg. By moving virtualization and networking functions off the CPU and onto dedicated silicon, Nitro frees Graviton and Trainium to focus entirely on compute workloads — an architectural philosophy that has become a template for how modern hyperscale servers are built.

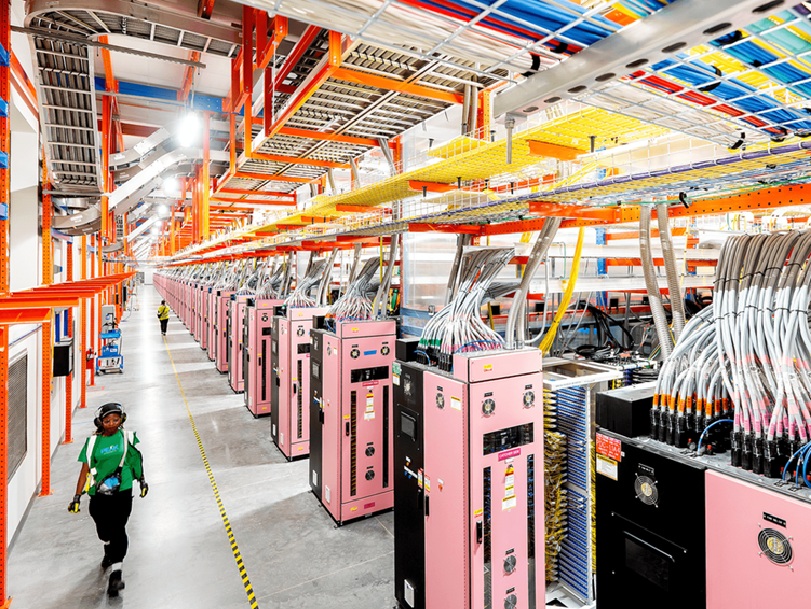

The UltraServer: AWS’s answer to the pod-scale compute problem

A Trainium2 UltraServer isn’t a single server in the traditional sense. It combines four physical servers, each carrying 16 Trainium2 chips, into one integrated system connected by NeuronLink — AWS’s proprietary chip-to-chip interconnect running on copper at roughly two terabytes per second with approximately one microsecond per hop latency. A single fully configured Trainium2 UltraServer houses 64 chips and delivers up to 83.2 FP8 petaflops of compute.

With the Trainium3 generation launched at re:Invent 2025, AWS scaled this further. Trainium3, built on TSMC’s 3nm process, packs 2.52 petaflops of FP8 compute per chip and 144 GB of HBM3e memory with 4.9 TB/s of bandwidth per chip. A fully configured Trainium3 UltraServer connects 144 chips via the new NeuronSwitch-v1 all-to-all fabric — delivering 362 FP8 petaflops total, 20.7 TB of aggregate HBM3e, and 706 TB/s of memory bandwidth in a single system. Energy efficiency improved 40% generation over generation.

Project Rainier: when UltraServers become an UltraCluster

The clearest illustration of what this architecture looks like at hyperscale is Project Rainier — AWS’s largest AI compute deployment, built specifically for Anthropic and its Claude model family. Project Rainier brought nearly 500,000 Trainium2 chips online across multiple U.S. data centers, primarily anchored at a campus in St. Joseph County, Indiana. Anthropic is scaling toward more than one million Trainium chips across training and inference workloads.

Rather than one massive co-located system, Project Rainier spans geographically separated facilities connected by Elastic Fabric Adapter networking. EFA Gen 3 provides tens of petabytes per second of bisection bandwidth and sub-10-microsecond latency between any source and destination pair in the cluster, with real-time path optimization that continuously monitors latency and reroutes traffic if a switch becomes congested or fails.

How AWS compares to Google’s approach

Google’s Ironwood Superpod is a closed system — optical circuit switching, proprietary ICI fabric, and a physical topology that functions as one machine. It is extraordinary in scale-up performance but difficult to partially deploy or incrementally expand.

AWS’s UltraServer and UltraCluster model is modular. NeuronLink handles the scale-up domain within each UltraServer, EFA handles scale-out across the cluster, and the two-tier architecture lets AWS grow incrementally. Trainium4, already in development, is being designed to support NVIDIA’s NVLink Fusion interconnect — a move that would allow Trainium and NVIDIA GPU servers to coexist within common MGX rack designs.

What this means for ITAD and the aftermarket

Trainium hardware is proprietary custom silicon, but the UltraServer architecture is somewhat more modular than a Google Superpod. The four-server building block means individual nodes can potentially be separated and evaluated independently. NeuronLink copper interconnects, while proprietary, are more conventional to handle than optical circuit switching fabric. The EFA networking gear connecting UltraClusters — switches, NICs, cabling — will represent significant secondary market volume as Project Rainier and its successors eventually cycle out.

The HBM3e memory arrays in retired Trainium3 systems will carry the same data destruction complexity as any frontier model training hardware. Certified sanitization, documented chain of custody, and R2v3-compliant processing are not optional for organizations handling this equipment at scale. The ITAD market is projected to grow from $14.6 billion in 2025 to $25.6 billion by 2035, driven largely by accelerating AI hardware refresh cycles. The organizations building expertise in hyperscaler-grade decommissioning now will be the ones positioned to capture that value when it comes.

Sources: AWS, About Amazon, SDxCentral, Data Centre Magazine, Data Center Frontier, AIwire, SemiAnalysis, Arm Newsroom — April 2026